How I Actually Build Software With AI Agents: The Honest Version

It's not magic. Agents get sandboxed. Specs get misunderstood. The planning phase takes longer than the coding, but compound engineering with AI agents is genuinely changing how I ship. Here's the real workflow.

I build software with AI agents. It’s messy and imperfect. It also works.

If you’ve read the polished version of this workflow elsewhere, you might think I whisper a feature idea into Telegram and watch it materialise on a staging URL twenty minutes later. That’s not what happens. The reality involves sandboxing failures, misunderstood specs, and iteration loops that go five to fifteen rounds before anything ships.

But here’s the thing: it’s still faster than the alternative. One technical founder with this process beats waiting six weeks for an agency that’ll hand you something you didn’t ask for anyway.

Let me show you what actually happens.

Phase 1: Planning

The philosophy behind this comes from compound engineering, an AI-native approach to software development that treats brainstorming, planning, building, and reviewing as a continuous loop rather than a waterfall. Each cycle compounds on the last. The spec gets sharper. The agents get better context. The output improves.

The biggest misconception about AI-assisted development is that the coding is the hard part. It isn’t. The hard part is knowing what to build in the first place.

I run planning sessions in Claude Code. Not typing one-liners. Actual collaborative brainstorming. I bring a problem, a half-formed idea, or a user pain point, and we iterate. Brainstorm → plan → spec. This isn’t “tell the AI what to build.” It’s thinking out loud with a collaborator that remembers everything, challenges assumptions, and helps me see around corners.

This is compound engineering: each session builds on the last. The context accumulates. The thinking gets sharper. By the time I’ve got a spec, I’ve usually spent more hours in this phase than I will on the entire build.

The output isn’t a ticket. It’s a detailed specification: acceptance criteria, out-of-scope items, dependencies, edge cases, and the user journey. The spec matters more than the code. A good spec with mediocre execution ships. A bad spec with perfect execution ships garbage faster.
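If you want the spec to be checkable rather than just readable, you can hold the sections listed above in a small structure and flag the empty ones before anything reaches an agent. This is a minimal sketch, not part of the workflow as described: the `Spec` class and `missing_sections` method are hypothetical, and treating every section as mandatory is an assumption (a small feature might legitimately have no dependencies).

```python
from dataclasses import dataclass, field


@dataclass
class Spec:
    """Hypothetical container for the spec sections: criteria, scope, dependencies, edge cases, journey."""

    title: str
    acceptance_criteria: list[str] = field(default_factory=list)
    out_of_scope: list[str] = field(default_factory=list)
    dependencies: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)
    user_journey: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of sections that are still empty, in spec order."""
        sections = {
            "acceptance_criteria": self.acceptance_criteria,
            "out_of_scope": self.out_of_scope,
            "dependencies": self.dependencies,
            "edge_cases": self.edge_cases,
            "user_journey": self.user_journey,
        }
        # Assumption: every section must have at least one entry before handoff.
        return [name for name, items in sections.items() if not items]
```

For example, `Spec(title="Saved items list", acceptance_criteria=["Shows saved items newest first"]).missing_sections()` returns `['out_of_scope', 'dependencies', 'edge_cases', 'user_journey']`, which is a reminder that the spec is not finished yet.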

Phase 2: Issues

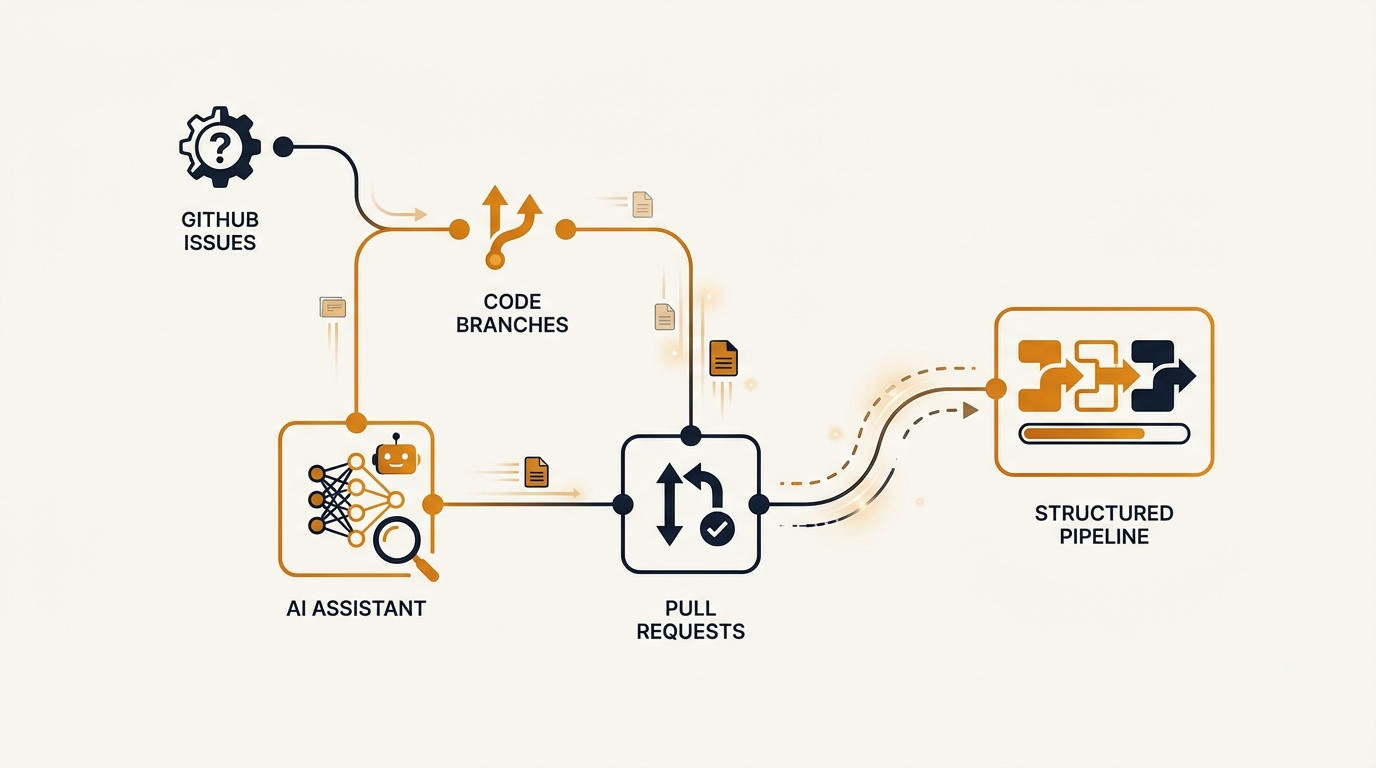

Once the spec is solid, it becomes a GitHub Issue. Not a vague title. The full specification, copied in, with checkboxes for acceptance criteria.
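GitHub renders `- [ ] item` lines in an issue body as checkboxes, so turning acceptance criteria into a task list is mostly string formatting. The sketch below is hypothetical: the function name and the section headings are illustrative, and it only builds the body text. Posting it (for example with the GitHub CLI's `gh issue create`) is left out.

```python
def render_issue_body(summary: str, acceptance_criteria: list[str], out_of_scope: list[str]) -> str:
    """Build a GitHub issue body with acceptance criteria as task-list checkboxes."""
    lines = [summary, "", "## Acceptance criteria"]
    # "- [ ]" is GitHub's task-list syntax; each line renders as an unticked checkbox.
    lines += [f"- [ ] {criterion}" for criterion in acceptance_criteria]
    lines += ["", "## Out of scope"]
    # Out-of-scope items stay as plain bullets: nothing to tick off.
    lines += [f"- {item}" for item in out_of_scope]
    return "\n".join(lines)
```

Keeping out-of-scope items as plain bullets, rather than checkboxes, makes it obvious to the agent which lines are work and which are boundaries.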

The issue goes onto a GitHub Projects kanban board: Todo → Agent → In Progress → Review → Done.
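One way to keep a board like this honest is to treat the columns as a small state machine and reject moves that skip a step. This is a hypothetical sketch: the forward transitions follow the column order described above, while allowing Review to send a task back to In Progress for another iteration round is an assumption, not a rule stated in the workflow.

```python
from enum import Enum


class Column(Enum):
    TODO = "Todo"
    AGENT = "Agent"
    IN_PROGRESS = "In Progress"
    REVIEW = "Review"
    DONE = "Done"


# Forward moves follow the board order. Review -> In Progress models an
# iteration round; that back-edge is an assumption for illustration.
ALLOWED = {
    Column.TODO: {Column.AGENT},
    Column.AGENT: {Column.IN_PROGRESS},
    Column.IN_PROGRESS: {Column.REVIEW},
    Column.REVIEW: {Column.IN_PROGRESS, Column.DONE},
    Column.DONE: set(),
}


def move(current: Column, target: Column) -> Column:
    """Return the new column, or raise if the move skips or reverses a step."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target
```

A task that goes Todo, Agent, In Progress, Review, back to In Progress, Review, then Done passes every check; jumping straight from Todo to Done raises a `ValueError`.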

Cass, my orchestrator agent running on OpenClaw, hands the issue off to Forge, my development agent. Forge reads the issue spec, spins up an isolated instance of Claude Code, and gets to work. As it progresses, Forge updates the GitHub Project board itself, moving the task through “In Progress” to “Review.” When it’s done, it hands back either a PR or a question to Cass, who brings the result to me via Telegram. The automated board updates exist precisely because without them, nothing would get tracked properly.
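The handoff has exactly two outcomes, a PR or a question, which maps naturally onto a small tagged result type. This is a hypothetical sketch of how an orchestrator might format what it relays: `PullRequest`, `Question`, and `telegram_message` are illustrative names, not OpenClaw APIs, and actually sending the message through Telegram is out of scope here.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class PullRequest:
    issue_number: int
    url: str


@dataclass
class Question:
    issue_number: int
    text: str


# Forge hands back one of these two outcomes, never anything else.
ForgeResult = Union[PullRequest, Question]


def telegram_message(result: ForgeResult) -> str:
    """Format the text an orchestrator would relay; delivery is not handled here."""
    if isinstance(result, PullRequest):
        return f"Issue #{result.issue_number}: PR ready for review at {result.url}"
    if isinstance(result, Question):
        return f"Issue #{result.issue_number}: Forge has a question. {result.text}"
    raise TypeError(f"unexpected result type: {type(result).__name__}")
```

Making the question a first-class outcome, rather than an error, is the point: an agent that asks is cheaper than an agent that guesses.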

Phase 3: Building

Here’s where the magic supposedly happens. It doesn’t. What happens is competent execution of a good spec, interrupted regularly by reality.

Cass directs Forge, which runs Claude Code inside the repository. The code harness for each project (CLAUDE.md plus custom skills like taste.md, premium.md, and no-shortcuts.md) sets the quality bar. These aren’t decorative. They’re enforced constraints that the coding agent reads before writing a single line. Forge manages the process: it spins up an isolated Claude Code instance, points it at the repo with the harness loaded, and oversees the execution.
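Claude Code reads a repository’s CLAUDE.md on its own, but a pre-flight check that the whole harness is present before a build starts is cheap insurance. This is a hypothetical sketch: the file names come from the harness described above, while the `skills/` directory layout and the `load_harness` helper are assumptions for illustration.

```python
from pathlib import Path

# Harness file names come from the workflow; the skills/ directory is a hypothetical layout.
HARNESS_FILES = ("CLAUDE.md", "skills/taste.md", "skills/premium.md", "skills/no-shortcuts.md")


def load_harness(repo: Path, files: tuple[str, ...] = HARNESS_FILES) -> str:
    """Check every harness file exists, then join them into one labelled preamble."""
    missing = [name for name in files if not (repo / name).is_file()]
    if missing:
        # Refuse to start a build without the quality bar in place.
        raise FileNotFoundError(f"harness incomplete, missing: {', '.join(missing)}")
    # Label each file so the agent (and a human reading logs) can tell the rules apart.
    sections = [f"# {name}\n{(repo / name).read_text(encoding='utf-8')}" for name in files]
    return "\n\n".join(sections)
```

Failing loudly on a missing file is the design choice here: a build that silently runs without no-shortcuts.md is exactly the kind of drift the harness exists to prevent.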

The Claude Code instance writes the code and raises pull requests. I host most of my apps and products on Railway, which auto-deploys a preview URL for every PR branch. That means every time Forge pushes code, I get a live preview I can check on my phone before anything touches production.

Sounds smooth? It isn’t. Forge gets sandboxed regularly. The agent environment loses access to the repository, or hits a permission boundary. When that happens, Cass has to step in and do the work directly, or I intervene. It’s a system with failure modes we’ve learned to handle.

Phase 4: Review and Iteration

I review on the Railway preview URL. Mobile first, then desktop. I test the user flow, not the code diff.

Every spec includes robust automated tests, so by the time I’m looking at the preview, the basics are already passing. My first review is always the output: does this work the way a user would expect?

Periodically I’ll go into the codebase with Claude and have it document the code. That’s where I spot drift: places where the implementation has quietly diverged from the original intent, or where the AI has misunderstood a concept. I feed those corrections back into the code harness, which keeps the overall project concept fresh and aligned between me and the agents. It’s a calibration loop. Without it, the codebase slowly drifts from what you actually wanted.

Feedback goes back via Telegram. Specific, not vague. “The button alignment is off on iPhone SE” or “The form validation doesn’t catch empty strings.” Cass relays this to Forge. Forge pushes fixes. Railway rebuilds. The loop continues.
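Feedback like “the form validation doesn’t catch empty strings” translates directly into a test plus a small fix. This is a hypothetical sketch of what that round might produce: `validate_required` and its error message are illustrative, and treating whitespace-only input as empty is an assumed reading of the feedback.

```python
from typing import Optional


def validate_required(value: Optional[str]) -> Optional[str]:
    """Return an error message for a missing or blank required field, else None."""
    # strip() makes whitespace-only input count as empty, not just "".
    if value is None or not value.strip():
        return "This field is required."
    return None


def test_validate_required() -> None:
    # Written first, as the acceptance criterion the fix has to satisfy.
    assert validate_required("") == "This field is required."
    assert validate_required("   ") == "This field is required."
    assert validate_required(None) == "This field is required."
    assert validate_required("Ada") is None
```

Encoding the feedback as a test means the same bug can’t quietly come back in round twelve.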

Five to fifteen rounds is normal. The AI builds exactly what you asked for, which isn’t always what you meant. The gap between specification and intention is where most iteration happens. You learn to write tighter specs, but you never eliminate the gap entirely.

Phase 5: The Honest Bits

Let me be direct about the friction.

Sandboxing failures. Forge loses repository access multiple times per week, through policy issues or transient failures. Cass handles the retry logic, but sometimes the agent stays stuck and I step in.
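The split between “retry” and “a human steps in” can be made explicit: retry transient failures with backoff, and escalate immediately on anything that retrying won’t fix. This is a minimal sketch, not Cass’s actual logic: `run_with_retries` and `NeedsHuman` are hypothetical, and using Python’s `PermissionError` for policy boundaries and `ConnectionError`/`TimeoutError` for transient failures is an assumed mapping.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class NeedsHuman(Exception):
    """Raised when retrying will not help and a person has to step in."""


def run_with_retries(
    task: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run an agent task, retrying transient failures with exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except PermissionError as exc:
            # Stand-in for a policy or permission boundary: retrying won't fix it.
            raise NeedsHuman(f"permission boundary: {exc}") from exc
        except (ConnectionError, TimeoutError) as exc:
            # Stand-in for transient failures such as lost repository access.
            if attempt == attempts:
                raise NeedsHuman(f"still failing after {attempts} attempts: {exc}") from exc
            sleep(base_delay * 2 ** (attempt - 1))  # 2s, 4s, 8s, ... with the defaults
    raise NeedsHuman("retry loop exited unexpectedly")  # not reached: the loop returns or raises
```

Injecting `sleep` keeps the backoff testable without real waiting; in production the default `time.sleep` applies.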

Misunderstood specs. The AI is literal. If your spec says “show a list of items” but doesn’t specify empty states, you’ll get a blank space or an error. Not because the AI is bad. Because you were imprecise. The cost of imprecision is rework.
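The fix is usually one extra line in the spec, such as “when there are no items, show a short message inviting the user to add one”, which an agent then implements literally. A hypothetical sketch of the resulting behaviour, with `render_items` and the message text chosen for illustration:

```python
def render_items(items: list[str]) -> str:
    """Render a list of items, with an explicit empty state."""
    if not items:
        # The case the spec forgot: say so instead of rendering a blank space.
        return "No items yet. Add your first one to get started."
    return "\n".join(f"- {item}" for item in items)
```

The branch itself is trivial; the point is that it only exists because the spec named it.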

Context limits. Long sessions need checkpointing. Too many files or too much history and the agent loses track. We break work into smaller chunks, but that adds overhead.
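Breaking work into chunks can be as simple as a greedy pass that fills each chunk up to a budget. This is a minimal sketch under stated assumptions: `chunk_files` is hypothetical, and the sizes are whatever per-file estimate you trust (for instance, estimated tokens), not a figure Claude Code reports.

```python
def chunk_files(sizes: dict[str, int], budget: int) -> list[list[str]]:
    """Greedily group files, in the given order, into chunks whose total size stays within budget."""
    chunks: list[list[str]] = []
    current: list[str] = []
    used = 0
    for name, size in sizes.items():
        if size > budget:
            # A single oversized file means the task itself needs splitting.
            raise ValueError(f"{name} alone exceeds the budget; split the file or the task")
        if current and used + size > budget:
            # Close the current chunk and start a fresh one.
            chunks.append(current)
            current, used = [], 0
        current.append(name)
        used += size
    if current:
        chunks.append(current)
    return chunks
```

With sizes `{"a.py": 400, "b.py": 500, "c.py": 300, "d.py": 900}` and a budget of 1000, this yields `[["a.py", "b.py"], ["c.py"], ["d.py"]]`. Keeping the input order, rather than bin-packing optimally, keeps related files together, at the cost of more chunks: that is the overhead mentioned above.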

It’s not no-code. I have thirty years of technical experience. We write tests first and have a QA process; I’m not reviewing every line manually. But I catch architectural mistakes before they ship because I know what to look for. Without that background, you’d ship broken things and not know until users complained.

A single code task can run for up to an hour. While Forge is building one feature, I’m working on the next: planning the spec, reviewing a different PR, or doing client work. When it finishes, I come back and do the QA. It’s asynchronous by design. My time isn’t spent watching code being written.

The compound engineering approach (brainstorm → plan → spec → build → review) is what makes this work. Skip the planning phase and the output is garbage. The process is the product.

Why It Still Works

Given all that friction, why bother?

Because the alternative is worse. I’ve worked with agencies. Six-week lead times. Discovery calls that discover nothing. Deliverables that miss the mark. Invoice arrives regardless.

With this workflow, I go from idea to shipped feature in days, not weeks. The iteration happens in real time, with me in the loop, making calls based on actual usage rather than mockups. When something’s wrong, I fix it in the next round.

One technical founder plus this process beats a small team managed through tickets and stand-ups. Not because the AI is magic (it isn’t), but because the feedback loop is tight and context never gets lost in translation.

The Real Requirement

Here’s what nobody tells you: technical knowledge is still essential.

You can’t outsource your thinking to AI. You plan. You spec. You review and sign off. The AI implements. If you don’t know what good looks like, you won’t recognise bad when it arrives dressed in working code.

This isn’t a democratisation tool for non-technical founders. It’s a leverage tool for technical founders to ship more software, faster, with higher quality.

The planning phase takes longer than the coding. The review phase takes longer than you want. Agents fail, specs get misunderstood, context limits force you to chunk work. But when it clicks, when a tight spec meets competent execution and a tight review loop, you ship features at a pace that feels like cheating.

It’s not magic. It’s just a different kind of engineering. One where the human does the thinking and the machine does the repeatable work. Which is exactly what AI is supposed to be for.

What’s Next

The current setup works, but there’s friction I want to remove. The next step is tightening the pipeline from GitHub Projects to Claude Code by moving execution to GitHub Actions runners. Those runners can still run on my own machine or on OpenClaw, and the move removes the orchestration overhead.

Once the workflow lives in GitHub’s native infrastructure, it becomes agent-agnostic. The same process works with whatever ships next: Hermes, Claude Cowork, or something that doesn’t exist yet. The spec, the issues, the review loop, the QA process: none of that changes. Only the execution layer swaps out.

That’s the point of building around a process rather than a tool. Tools change every six months. A good process outlasts all of them.

The compound engineering philosophy is what made this shift possible. Once the brainstorm-plan-build-review loop was tight enough, I stopped being bottlenecked on a single project. Now I work across multiple projects and tasks simultaneously: while one feature is being built, I’m planning the next, reviewing another, or doing client work. The system compounds because every cycle makes the next one faster.

Sources and Further Reading

- Compound Engineering: the AI-native engineering philosophy behind the brainstorm-plan-build-review loop

- Anthropic Economic Index (November 2025): AI reduces development task time by 80% on average; 79% of Claude Code conversations were classified as automation, vs 49% for chat-based use on Claude.ai

- Stack Overflow Developer Survey 2025: 84% of developers use or plan to use AI tools in their development process, up from 76% in 2024

- Andrej Karpathy on “vibe coding” (February 2025): the exploratory approach to AI-assisted coding, vs the structured workflow described here

- OpenClaw: the AI agent platform used for orchestration, memory, and agent management

- Claude Code: Anthropic’s agentic coding tool used by Forge for implementation

- GitHub Projects: kanban board for tracking issues through the pipeline

Frequently asked questions

How does AI-assisted software development actually work in practice?

The workflow involves five phases: writing a detailed specification (planning), breaking it into GitHub issues, running sandboxed Claude Code instances against individual issues (building), reviewing outputs over 5-15 iteration cycles, and shipping. The planning phase typically takes longer than the coding itself.

What is OpenClaw?

OpenClaw is the AI agent platform that Cass, the orchestrator, runs on. It handles orchestration, persistent memory, and agent management, so agents don’t start each session blind. Combined with each project’s code harness (CLAUDE.md plus custom skills), it keeps multi-session projects coherent over time.

What makes a good specification for AI-assisted coding?

A good spec defines the exact behaviour expected, the acceptance criteria, the files the agent should touch, and what it should not change. Vague specs produce vague outputs; specific specs with examples produce shippable code that needs fewer revision cycles.

Is technical knowledge still required when building software with AI agents?

Yes. The article is explicit that technical knowledge remains essential for writing accurate specs, reviewing outputs, catching incorrect assumptions, and understanding when an agent has gone off course. AI speeds up the execution but does not replace the engineering judgment required to direct it.